Lab homepage open!

Thanks to the many people who have contributed to this homepage with ideas, suggestions, or actual work.

Also feel free to give any new suggestions you may have about this homepage. 🙂

We are actively seeking motivated graduate students to join our creative team.

Contact us if interested.

Sep. 8, Introduction to Intellectual Property and patent attorney

Speaker: Yongjoon Jeon, Taebaek Patent & Law Firm

Time and Place: 16:00-17:00, E104

Oct. 27, Engineers, Dream and Dream Big!

Speaker: Prof. Jongwon Kim, SNU

Time and Place: 16:00-17:00, E104

•HTM (Hierarchical Temporal Memory): not programmed, and does not use different algorithms for different problems.

1) Discover causes

– Find relationships among the inputs.

– A possible cause is called a “belief”.

2) Infer causes of novel input

– Inference : Similar to pattern recognition

– Ambiguous input -> flat belief distribution.

– HTMs handle novel input both during inference & training

3) Make predictions

– Each node stores sequences of patterns.

– Stored sequences + current input -> predict what will happen next.

4) Direct behavior: interact with the world.
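As a rough illustration of item 3 (stored sequences plus the current input give a prediction), here is a minimal Python sketch. It is not the HTM algorithm itself: the `SequenceNode` class, its method names, and the first-order "what followed what" counting are all assumptions made for this example.

```python
from collections import Counter, defaultdict


class SequenceNode:
    """Toy node (hypothetical): remembers which pattern followed which."""

    def __init__(self):
        # transitions[current][following] = how often `following` came after `current`
        self.transitions = defaultdict(Counter)

    def learn(self, sequence):
        """Store every adjacent pair of patterns in the sequence."""
        for current, following in zip(sequence, sequence[1:]):
            self.transitions[current][following] += 1

    def predict(self, current):
        """Return the most frequent follower of `current`, or None if unseen."""
        followers = self.transitions.get(current)
        if not followers:
            return None
        return followers.most_common(1)[0][0]


node = SequenceNode()
node.learn(["A", "B", "C", "D"])
node.learn(["A", "B", "C", "E"])
node.learn(["X", "B", "C", "D"])

print(node.predict("B"))  # C
print(node.predict("C"))  # D (seen twice, versus E once)
print(node.predict("Z"))  # None (never seen)
```

A real HTM node works with longer temporal context and passes beliefs up and down the hierarchy; this sketch only shows the "stored sequences + current input" idea in its simplest form.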

How do HTMs discover and infer causes?

Why is a hierarchy important?

1) Shared representations lead to generalization and storage efficiency.

2) The hierarchy of HTM matches the spatial and temporal hierarchy of the real world.

3) Belief propagation ensures all nodes quickly reach the best mutually compatible beliefs.

– Belief propagation calculates the marginal distribution for each unobserved node, conditional on any observed nodes.

4) Hierarchical representation affords a mechanism for attention.
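To make the belief propagation note in item 3 concrete, the sketch below computes the marginal of each unobserved node given one observed node on a tiny chain A -> B -> C. This is the generic textbook form of belief propagation, not HTM's specific variant, and the network shape and all probability values are made up for illustration. The result is checked against brute-force enumeration.

```python
# Hypothetical 3-node chain A -> B -> C with binary variables (values invented).
p_a = [0.6, 0.4]                        # P(A)
p_b_given_a = [[0.7, 0.3], [0.2, 0.8]]  # P(B | A): rows = a, columns = b
p_c_given_b = [[0.9, 0.1], [0.4, 0.6]]  # P(C | B): rows = b, columns = c


def normalize(values):
    total = sum(values)
    return [v / total for v in values]


def posterior_by_message_passing(c_obs):
    """Marginals of A and B given C = c_obs, using local messages only."""
    # Upward message C -> B: likelihood of the observation for each b.
    msg_c_to_b = [p_c_given_b[b][c_obs] for b in range(2)]
    # Upward message B -> A: sum over b of P(b | a) * msg_c_to_b[b].
    msg_b_to_a = [
        sum(p_b_given_a[a][b] * msg_c_to_b[b] for b in range(2))
        for a in range(2)
    ]
    # Downward message A -> B: prior on B, sum over a of P(a) * P(b | a).
    msg_a_to_b = [
        sum(p_a[a] * p_b_given_a[a][b] for a in range(2))
        for b in range(2)
    ]
    belief_a = normalize([p_a[a] * msg_b_to_a[a] for a in range(2)])
    belief_b = normalize([msg_a_to_b[b] * msg_c_to_b[b] for b in range(2)])
    return belief_a, belief_b


def posterior_by_enumeration(c_obs):
    """Same marginals, computed by summing the full joint distribution."""
    joint = {
        (a, b): p_a[a] * p_b_given_a[a][b] * p_c_given_b[b][c_obs]
        for a in range(2)
        for b in range(2)
    }
    belief_a = normalize([sum(joint[(a, b)] for b in range(2)) for a in range(2)])
    belief_b = normalize([sum(joint[(a, b)] for a in range(2)) for b in range(2)])
    return belief_a, belief_b


bp_a, bp_b = posterior_by_message_passing(c_obs=1)
bf_a, bf_b = posterior_by_enumeration(c_obs=1)
print("P(A | C=1):", bp_a)
print("P(B | C=1):", bp_b)
```

Each node only combines messages from its neighbors, which is why the same scheme scales to a deep hierarchy where enumerating the full joint would be impractical.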

How does each node discover and infer causes?

Assigning causes.

The most common sequences of patterns are assigned as causes.

The assigned causes are then used for prediction, behavior, etc.

Why is time necessary to learn?

•Pooling (many-to-one) methods

– Overlap

– Learning of sequences: this is the method HTM uses.

PCNN (Pulse-Coded Neural Networks): a network model used to evaluate biology-oriented image processing, usually simulated on general-purpose computers, e.g., PCs or workstations.

SNNs (Spiking Neural Networks): a neural network model. In addition to neuronal and synaptic state, SNNs also incorporate the concept of time into their operating model.

SEE (Spiking Neural Network Emulation Engine): a field-programmable gate array (FPGA) based emulation engine for spiking neurons and adaptive synaptic weights. It tackles the memory bottleneck by providing a distributed memory architecture and high bandwidth to the weight memory.

PCNN – Runs on PCs and workstations -> time consuming

– Cause: a bottleneck from sequential access to the weight memory

FPGA SEE – Distributed memory

– High-bandwidth weight memory

– Separates the calculation of neuron states from that of the network topology

SNNs or PCNNs

1. Reproduce spikes or pulses.

2. Solve problems such as vision tasks.

Problems of large PCNNs

1. Calculation steps.

2. Communication resources.

3. Load balancing.

4. Storage capacity.

5. Memory bandwidth.

Non-leaky integrate-and-fire neuron(IFN)
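For readers unfamiliar with the term, the following is a minimal sketch of a generic non-leaky integrate-and-fire neuron: the membrane potential simply accumulates input current (no decay term), and the neuron fires and resets when a threshold is reached. This is the textbook form, not necessarily the exact neuron model used in SEE; the function name, parameters, and reset-to-zero rule are assumptions.

```python
def simulate_ifn(input_current, threshold=1.0, dt=1.0, capacitance=1.0):
    """Return the time steps at which a non-leaky IF neuron fires.

    Assumed model: dV/dt = I / C, spike when V >= threshold, then V = 0.
    """
    v = 0.0
    spike_steps = []
    for step, current in enumerate(input_current):
        v += current * dt / capacitance  # pure integration, no leak term
        if v >= threshold:
            spike_steps.append(step)
            v = 0.0  # reset after firing
    return spike_steps


# Constant input: the neuron fires every fourth step.
print(simulate_ifn([0.25] * 10))           # [3, 7]

# Non-leaky: charge is kept during silent steps, so two separated
# half-threshold inputs still add up to a spike.
print(simulate_ifn([0.5, 0, 0, 0, 0.5]))   # [4]
```

In a leaky model the second example would not fire, because the potential would decay during the silent steps.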

Overview of the SEE architecture

A. Simulation control (PPC2)

1. Configuration of the network.

2. Monitoring of network parameters.

3. Administration of the event-lists.

– Two event-lists:

DEL (Dynamic Event-List) includes all excited neurons that receive a spike or an external input current.

FEL (Fire Event-List) stores all firing neurons that are in a spike-sending state, together with the time values at which each neuron enters the spike-receiving state again.

B. Network Topology Computation(NTC)

– Topology-vector-phase : The presynaptic activity is determined for each excited neuron.

– Topology-update-phase : The tag-fields are updated according to occurred spike start-events or spike stop-events.

C. Neuron State Computation(NSC)

– Neuron-spike-phase : It is determined whether an excited neuron will start to fire before the next spike stop-event.

– Neuron-update-phase

– Bulirsch-Stoer method of integration (MMID, PZEXTR)

– Modified-midpoint integration (MMID)

– Polynomial extrapolation (PZEXTR)
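The two building blocks of the Bulirsch-Stoer method listed above can be sketched in a few lines of Python. This is a simplified scalar version for illustration only: it uses a fixed step size `H` with no adaptive step control, and the function names, the substep sequence 2, 4, 6, ..., and the tolerance are assumptions rather than details taken from the SEE paper.

```python
import math


def modified_midpoint(f, t, y, H, n):
    """Advance y' = f(t, y) from t to t + H using n modified-midpoint substeps."""
    h = H / n
    z_prev = y
    z_curr = y + h * f(t, y)
    for m in range(1, n):
        z_next = z_prev + 2.0 * h * f(t + m * h, z_curr)
        z_prev, z_curr = z_curr, z_next
    return 0.5 * (z_curr + z_prev + h * f(t + H, z_curr))


def bulirsch_stoer_step(f, t, y, H, tol=1e-10, max_rows=8):
    """One step of size H: midpoint results extrapolated to zero substep size."""
    table = []
    for k in range(max_rows):
        n_k = 2 * (k + 1)  # substep counts 2, 4, 6, ...
        row = [modified_midpoint(f, t, y, H, n_k)]
        for j in range(1, k + 1):
            n_kj = 2 * (k - j + 1)
            # Polynomial (Richardson) extrapolation in h**2.
            factor = (n_k / n_kj) ** 2 - 1.0
            row.append(row[j - 1] + (row[j - 1] - table[k - 1][j - 1]) / factor)
        table.append(row)
        if k > 0 and abs(row[-1] - table[k - 1][-1]) < tol:
            return row[-1]
    return table[-1][-1]


def growth(t, y):
    """Test problem y' = y with exact solution y(t) = exp(t)."""
    return y


coarse = modified_midpoint(growth, 0.0, 1.0, 1.0, 2)
refined = bulirsch_stoer_step(growth, 0.0, 1.0, 1.0)
print("midpoint, n=2 :", coarse, "error", abs(coarse - math.e))
print("extrapolated  :", refined, "error", abs(refined - math.e))
```

The extrapolation works because the modified-midpoint error expands in even powers of the substep size, so each added column of the table cancels another error term.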

Performance analysis

| n | N_NEURON | N_BSSTEP | T_SW | T_SEE | F_SPEED-UP |
|---|---|---|---|---|---|
| 4 | 32X32 | 98717 | 1405 s | 45 s | 31.2 |
| 4 | 48X48 | 222365 | 6527 s | 226 s | 28.9 |
| 4 | 64X64 | 420299 | 22620 s | 758 s | 29.8 |
| 4 | 80X80 | 721463 | 65277 s | 2032 s | 32.1 |
| 4 | 96X96 | 926458 | 119109 s | 3757 s | 31.7 |
| 8 | 32X32 | 107276 | 1990 s | 63 s | 31.6 |
| 8 | 48X48 | 235863 | 7263 s | 312 s | 23.3 |
| 8 | 64X64 | 413861 | 31548 s | 972 s | 32.5 |
| 8 | 80X80 | 645694 | 80378 s | 2370 s | 33.9 |
| 8 | 96X96 | 967572 | 142834 s | 5113 s | 29.9 |
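The notes do not define the speed-up column, but it appears to be the software simulation time divided by the SEE time. A short Python check under that assumption, using the n = 4 rows of the table (the variable and function names are made up for this example):

```python
# (network size, software time in s, SEE time in s) for the n = 4 rows
rows_n4 = [
    ("32X32", 1405, 45),
    ("48X48", 6527, 226),
    ("64X64", 22620, 758),
    ("80X80", 65277, 2032),
    ("96X96", 119109, 3757),
]


def speed_up(t_sw, t_see):
    """Assumed definition: software time divided by SEE time."""
    return t_sw / t_see


for size, t_sw, t_see in rows_n4:
    print(size, round(speed_up(t_sw, t_see), 1))
```

For these rows the ratios round to the listed values (31.2, 28.9, 29.8, 32.1, 31.7), so SEE is roughly 30 times faster than the software simulation across network sizes.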

– Reference

“Emulation Engine for Spiking Neurons and Adaptive Synaptic Weights,” by H. H. Hellmich, M. Geike, P. Griep, P. Mahr, M. Rafanelli, and H. Klar.

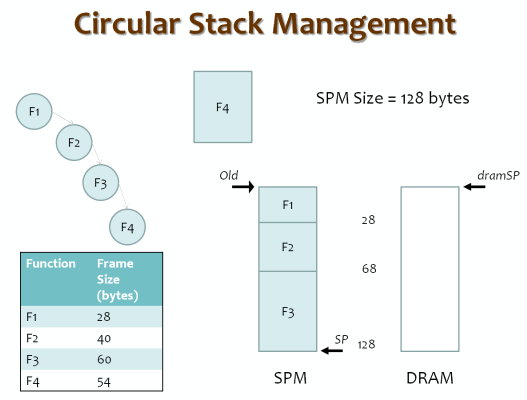

A dynamic scratch pad memory (SPM) management scheme for program stack data, aimed at reducing processor power, is presented. The basic technique does not need the SPM size at compile time, does not mandate any hardware changes, does not need profile information, and seamlessly integrates support for recursive functions. Stack frames are managed by a software SPM manager integrated into the application binary; the scheme shows average energy savings of 32% along with a performance improvement of 13% on benchmarks from MiBench. If the SPM size is known, SPM management can be further optimized and made pointer-safe.

Read the full paper: “A Software-Only Solution to Use Scratch Pads for Stack Data,” by Aviral Shrivastava, Arun Kannan, and Jongeun Lee*, published in IEEE Transactions on CAD, vol. 28, no. 11, pp. 1719-1727, November 2009.

Honestly, I’m a little confused, so I’m trying things out. My questions:

Done it at last.

Contributors: Youngmoon Um (right) and Mooyoung Lee (left), both majoring in ECE at UNIST.

Jongeun Lee joined Ulsan National Institute of Science and Technology (UNIST) in August 2009 and is an Associate Professor of Electrical and Computer Engineering. Dr. Lee received his B.S. (1997) and M.S. (1999) in Electrical Engineering, and his Ph.D. (2004) in Electrical Engineering and Computer Science, all from Seoul National University.

Prior to joining UNIST, Dr. Lee was a Senior Researcher at Samsung Electronics SoC Research Center (2004.1-2007.10) and a Postdoctoral Research Associate at Arizona State University (2007.11-2009.8). Dr. Lee received a Postdoctoral Research Fellowship from KRF (Korea Research Foundation) in 2007.

Dr. Lee has published more than 70 papers in refereed journals and conferences, as well as a book chapter and several patents. His research interests include deep learning processors, reconfigurable processors, and compilation for low power, reliability, and multi-core processors.

Two U-SURF students are visiting the HPC lab at UNIST for an internship. During the four-week period, the students will participate in graduate research projects while staying in the dormitory with other U-SURF students. Other activities include a one-day MT provided to all U-SURF participants.